How to Build a Health Score Teams Trust

How to account for segment differences, seasonality, and real customer context without overcomplicating your model.

DEAR STAGE 2: Our “red/yellow/green” customer health score keeps flagging healthy accounts as risky. How do we design health scoring that actually accounts for the multiple segments we serve, context on the account, and the natural seasonality in our industry? ~DROWNING IN EDGE CASES

DEAR DROWNING IN EDGE CASES: I hear you! If your health score keeps crying wolf, your team stops listening. And once that happens, it’s all just noise.

We called on Parker Chase-Corwin, a Stage 2 LP and fractional CS leader and advisor who has built CS, support, and services teams from Series A through Fortune 50, to shed some light on this question. His perspective? “Health scores aren’t meant to confirm what you already know. They’re meant to surface the blind spots.” The challenge, of course, is doing that when your customer base isn’t uniform.

Here’s how he recommends tackling this challenge:

Define “healthy” for each segment before you try to score it

Most health score problems are actually definition problems, especially if you are leveraging AI to help you with diagnostics.

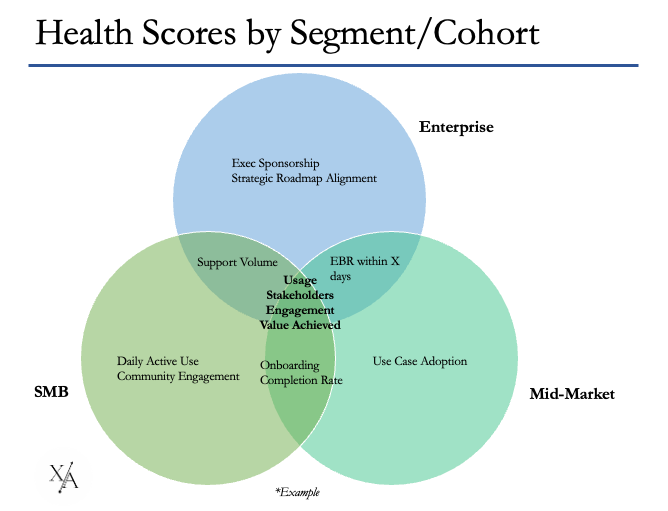

If you serve SMB, mid-market, and enterprise customers, you likely have three different definitions of success (whether you’ve written them down or not). An enterprise account may be perfectly healthy with modest usage but strong executive alignment and a clear roadmap. An SMB account may only be healthy if usage is frequent and consistent. When you force those realities into one uniform red/yellow/green model, you create false positives.

Parker put it well: “You want the smallest number of inputs that reliably point to risk. Not the largest number of signals you can collect.” In practice, if you’ve got a lot of edge cases, that means creating segment-specific scorecards, even if 70% of the inputs are shared. Adjust thresholds by segment. Allow for nuance. Keep the number of signals intentionally small so you can interpret movement over time.
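To make the segment-specific scorecard idea concrete, here is a minimal Python sketch. It assumes each signal has already been normalized to a 0 to 1 range. The signal names, weights, and thresholds are invented for illustration and are not a recommended set.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Scorecard:
    weights: dict[str, float]  # signal name -> weight (weights sum to 1.0)
    yellow_below: float        # scores under this are yellow
    red_below: float           # scores under this are red

# Hypothetical scorecards: the inputs are shared, the weights and thresholds differ.
SCORECARDS = {
    "smb": Scorecard(
        weights={"weekly_usage": 0.6, "support_sentiment": 0.2, "exec_alignment": 0.2},
        yellow_below=0.7,
        red_below=0.5,
    ),
    "enterprise": Scorecard(
        weights={"weekly_usage": 0.2, "support_sentiment": 0.2, "exec_alignment": 0.6},
        yellow_below=0.6,
        red_below=0.4,
    ),
}

def score_account(segment: str, signals: dict[str, float]) -> tuple[float, str]:
    """Return (score, color) using the scorecard for the account's segment."""
    card = SCORECARDS[segment]
    score = sum(weight * signals[name] for name, weight in card.weights.items())
    if score < card.red_below:
        color = "red"
    elif score < card.yellow_below:
        color = "yellow"
    else:
        color = "green"
    return round(score, 2), color

# Modest usage, strong executive alignment: the same inputs read differently by segment.
signals = {"weekly_usage": 0.3, "support_sentiment": 0.8, "exec_alignment": 0.9}
smb_result = score_account("smb", signals)
enterprise_result = score_account("enterprise", signals)
print(smb_result, enterprise_result)
```

With these made-up numbers, the SMB scorecard lands on yellow while the enterprise scorecard lands on green, which is the point: one account profile, two definitions of healthy.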

Account for seasonality by shifting the lens, not lowering the bar

Seasonality breaks a lot of early health score models. If you work with construction, education, retail, or any industry with predictable slow periods, your “usage = health” logic will collapse at least once a year.

Instead of letting the model panic every off-season, treat those periods as a different operating mode. When activity is expected to dip, shift what you’re measuring. During slower months, product usage may matter less than planning, responsiveness, stakeholder stability, and readiness for the busy season.

In other words, during hibernation you aren’t measuring “how much they’re doing.” You’re measuring “are we aligned and ready.”

That shift preserves signal integrity without creating noise. It also forces your team to be thoughtful about what really indicates risk versus what’s simply expected behavior.
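One way to picture “shifting the lens” is a second set of weights that takes over during the expected slow period while the bar for healthy stays put. The sketch below is only an illustration: the off-season months, signal names, weights, and the 0.7 threshold are all assumptions.

```python
from datetime import date

# Hypothetical slow period for an illustrative customer base.
OFF_SEASON_MONTHS = {12, 1, 2}

# The bar stays the same all year; only the inputs being weighed change.
HEALTHY_THRESHOLD = 0.7

IN_SEASON_WEIGHTS = {
    "product_usage": 0.6,
    "responsiveness": 0.2,
    "stakeholder_stability": 0.2,
}
OFF_SEASON_WEIGHTS = {
    "product_usage": 0.1,
    "responsiveness": 0.3,
    "stakeholder_stability": 0.3,
    "planning_readiness": 0.3,
}

def seasonal_score(signals: dict[str, float], on_date: date) -> float:
    """Score 0..1 signals with the weights for the current operating mode."""
    off_season = on_date.month in OFF_SEASON_MONTHS
    weights = OFF_SEASON_WEIGHTS if off_season else IN_SEASON_WEIGHTS
    return round(sum(w * signals.get(name, 0.0) for name, w in weights.items()), 2)

# Low usage, but engaged and planning for the busy season.
signals = {
    "product_usage": 0.1,
    "responsiveness": 0.9,
    "stakeholder_stability": 1.0,
    "planning_readiness": 0.8,
}
january = seasonal_score(signals, date(2024, 1, 15))
july = seasonal_score(signals, date(2024, 7, 15))
print(january >= HEALTHY_THRESHOLD, july >= HEALTHY_THRESHOLD)
```

In this toy example the same low usage passes in January and fails in July, because low usage in the busy season is a real warning and low usage in the off-season is expected behavior.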

Protect trust with simplicity and transparency

As your model evolves, resist the urge to throw in 50 signals just because you can. It’s tempting, especially with new tooling and AI layers, but more variables often create more volatility, not more accuracy.

Parker cautioned against over-complication: “When you have too many variables, it’s going to ping-pong all over the place. You want trending you can trust.” If your score is constantly bouncing because minor inputs shift daily, your team won’t know what actually matters or how to take the next best action.

Four principles help maintain trust:

Keep your core signals precise and stable.

Iterate intentionally (quarterly or semi-annually), not impulsively.

Keep the inputs visible so team members can reverse-engineer the score to determine which signals need to be prioritized and addressed.

Allow a “human override” so the people closest to the customer can adjust the score based on qualitative context that isn’t showing up in the signals.
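The last two principles, visible inputs and a human override, can be sketched in a few lines of Python. Everything named here is hypothetical (the weights, the signal names, the `HealthStatus` fields), and requiring a written reason for an override is a design assumption of this sketch, not something prescribed above.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical weights and signal names, for illustration only.
WEIGHTS = {"weekly_usage": 0.5, "support_sentiment": 0.2, "exec_alignment": 0.3}

def points_lost(signals: dict[str, float]) -> list[tuple[str, float]]:
    """Rank signals by how much each one drags the score down (signals are 0..1)."""
    gaps = {name: round(w * (1.0 - signals[name]), 2) for name, w in WEIGHTS.items()}
    return sorted(gaps.items(), key=lambda item: item[1], reverse=True)

@dataclass
class HealthStatus:
    computed_color: str
    override_color: Optional[str] = None
    override_reason: Optional[str] = None
    override_by: Optional[str] = None

    @property
    def color(self) -> str:
        # The override wins, but the computed color stays on record.
        return self.override_color or self.computed_color

    def apply_override(self, color: str, reason: str, by: str) -> None:
        # Assumption: an override without a stated reason is rejected.
        if not reason.strip():
            raise ValueError("an override needs a written reason")
        self.override_color = color
        self.override_reason = reason
        self.override_by = by

signals = {"weekly_usage": 0.4, "support_sentiment": 0.9, "exec_alignment": 0.8}
breakdown = points_lost(signals)  # biggest drag on the score comes first

status = HealthStatus(computed_color="yellow")
status.apply_override(
    "green", "Champion confirmed renewal budget on last call", by="csm@example.com"
)
print(breakdown[0][0], status.color, status.computed_color)
```

Keeping both the computed color and the override, along with who changed it and why, lets you review later whether the model or the human read the account better.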

You can absolutely use AI to mine sentiment or identify anomalies in transcripts and emails, but the foundational rules still need to be understandable and defensible. Especially if you’re presenting health to your board, the model must clearly connect to observable behaviors, not a subjective “they seem happy.”

A health score people understand is far more valuable than a sophisticated one no one believes.

Design the health score to trigger action

A red account without a defined motion is…nothing.

The most underutilized part of health scoring is intervention. When an account dips, how quickly do you respond? What changes? Who gets involved? One metric I love tracking is time to first intervention after a score change. If you can consistently respond within a defined window, you’ve turned a passive report into an operational lever.
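If you want to track time to first intervention, a rough Python sketch might look like the following. The account names, timestamps, and the 48-hour response window are made up for illustration; your own window and event sources will differ.

```python
from datetime import datetime, timedelta
from statistics import median
from typing import Optional

RESPONSE_WINDOW = timedelta(hours=48)  # hypothetical target window

def time_to_first_intervention(
    score_changed_at: datetime, interventions: list[datetime]
) -> Optional[timedelta]:
    """Delay between a score change and the first touch after it, or None if no touch yet."""
    later = [t for t in interventions if t >= score_changed_at]
    return min(later) - score_changed_at if later else None

# Made-up events: (when the score changed, when someone intervened).
events = {
    "acct_a": (datetime(2024, 3, 1, 9), [datetime(2024, 3, 2, 9)]),
    "acct_b": (datetime(2024, 3, 4, 9), [datetime(2024, 3, 7, 9)]),
    "acct_c": (datetime(2024, 3, 5, 9), []),  # nobody has responded yet
}

delays = {
    name: time_to_first_intervention(changed, touches)
    for name, (changed, touches) in events.items()
}

# Median response time, in hours, across accounts that got a response.
responded_hours = [d.total_seconds() / 3600 for d in delays.values() if d is not None]
median_hours = median(responded_hours)

# Count of accounts reached inside the window; unanswered accounts count as misses.
within_window = sum(
    1 for d in delays.values() if d is not None and d <= RESPONSE_WINDOW
)
print(median_hours, within_window, len(delays))
```

Counting unanswered accounts as misses keeps the metric honest: a median that only looks at accounts you did reach can hide the ones nobody touched.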

This is also where Stage 2’s concept of a single behavioral anchor (the Leading Indicator of Retention from the Science of Scaling framework) becomes useful. If you can name one core behavior that healthy customers consistently demonstrate within a given timeframe, your model becomes dramatically easier to manage. The health score becomes a system that asks, “Are they building the habit that leads to renewal?” And when that habit breaks, you intervene.
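As a loose illustration of a single behavioral anchor, the check below asks whether an account performed one core behavior often enough in a recent window. The 30-day window, the minimum of four events, and the example dates are placeholders, not values from the Science of Scaling framework.

```python
from datetime import date, timedelta

def habit_intact(
    event_dates: list[date],
    as_of: date,
    window_days: int = 30,  # hypothetical timeframe
    min_events: int = 4,    # hypothetical bar for "consistently"
) -> bool:
    """True if the anchor behavior happened at least min_events times in the window."""
    window_start = as_of - timedelta(days=window_days)
    recent = [d for d in event_dates if window_start < d <= as_of]
    return len(recent) >= min_events

# Made-up history: the core behavior happened weekly in March, then stopped.
anchor_events = [date(2024, 3, 3), date(2024, 3, 10), date(2024, 3, 17), date(2024, 3, 24)]

end_of_march = habit_intact(anchor_events, as_of=date(2024, 3, 31))
end_of_april = habit_intact(anchor_events, as_of=date(2024, 4, 30))
print(end_of_march, end_of_april)
```

When the check flips from true to false, that is the moment the habit broke and the cue to intervene.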

Health scoring at this stage shouldn’t be about perfection. It should help your CSMs prioritize, give leadership a credible read on the base, and surface blind spots early enough to do something about them.

Until next week!